Note: This is cross-posted from my weekly newsletter, both to make it easier to read via RSS feed and to keep it in my own independent archives. You can subscribe here to get it delivered to your inbox.

×

Hello Friends,

Time for a long-overdue check-in. Before jumping into details, a quick reminder of who I am and why this newsletter might be in your inbox: My name is Peter Bihr and you may have found me via my website thewavingcat.com or Twitter (@peterbihr), two places where I try to explore how emerging tech can benefit society. Or maybe through the ThingsCon network, which I co-founded. I’m also co-host of the (occasional) Getting Tech Right podcast. And while I’m very happy that you’re reading this, the unsubscribe link is, as always, just a click away down in the footer.

Also, as always, I love hearing back from you. Feel free to just hit reply on this email or reach out for a proper chat anytime.

Peter

×

Updates from the engine room

It’s been a busy year so far, and lots has been happening all around. Some quick updates from the engine room about what I’ve been up to — if some of it resonates with you, ping me and let’s chat!

Mercator Foundation

For well over a year now, I’ve been working with Stiftung Mercator’s Carla Hustedt and her Digital Society team. In my opinion, they’re hands-down the best actor in Germany in this space, and I feel lucky to get to be part of this endeavor. More specifically, they have a focus on civil society capacity building, which I believe is essential at this point in time. With the team, we’re in the final stages of setting up an ambitious new initiative which I can’t wait to share more of once it’s ready to go public, probably in just a few months.

If you speak German, and work in a non-profit where you need to understand advocacy and policy work, you might also find this useful: We put together a handbook for civil society organizations called “Digitalpolitische Gesetzgebung wirkungsvoll begleiten — ein Leitfaden für die Zivilgesellschaft”. (A translation into English is upcoming.) It’s a quick hands-on guide on how to engage with the political process to represent your organization’s or community’s interests and needs, and to help get your most important topics onto the political agenda.

European AI Fund

I was very happy about my stint as Interim Director of the European AI Fund, a comparatively small but fantastic initiative to help strengthen civil society around AI issues, and to galvanize the philanthropic sector around societal aspects of AI as well. My interim role ended as planned when the brilliant Catherine Miller took over as the new permanent director, and I couldn’t be happier about this outcome. Also, I felt quite honored when a board member kindly sent me a note saying that this has “literally been the smoothest transition of this nature I’ve ever experienced. Amazing work.” And of course I’m glad that I get to keep working with Catherine and the fund on developing their strategy going forward.

Getting Tech Right

The Getting Tech Right podcast is an attempt to explore how we can think better about tech & society. It’s aimed at policy makers and funders, at decision makers at foundations and in the public sector. It’s also currently on hiatus; I’ve been thinking about a new season for some time now, but am still waiting for a good opportunity (read: the necessary time) to tackle this project.

What else?

- At RightsCon, we (the European AI Fund, Stiftung Mercator and myself) ran a session in which we explored no-brainers for funders in the digital society space. Lea Wulf over at Mercator kindly wrote up our findings (cross-posted on my site here). Spoiler: Long-term core funding is key; as is strong collaboration between funder and grantees as well as among grantees; and great relationships depend on great accountability.

- UN Habitat published a series of playbooks as part of their People Centered Smart Cities flagship program. I was happy to be a peer reviewer last year for the first book in the series, Centering People in Smart Cities (PDF). Here’s a little more background.

×

Small bits & pieces

What else is going on? Some of the things I’ve been thinking about that are being discussed across my backchannels.

Is standardization the new frontier for civil society?

It appears that a lot of the work of regulating emerging tech is being moved out of the legal texts and into standards bodies. Often for good reasons, but it still leads to issues. I tried to explore some of them in this blog post.

AI as a forcing function for societal debates

I’ve been hearing so much hand-wringing about the way AI is discussed, especially AI bias, because more often than not it’s like discussing only the tiniest manifestation of a larger issue. Is AI bias our problem, or structural racism? Is it this algorithm or is it discrimination at the workplace that’s encoded in the algorithm? You can probably tell where I’m going with this: We discuss a zoomed-in version of a problem because some new tech tool forces the debate, but the issues are so much larger, so much more systemic, that the debate tends to miss the point. It simply feels overwhelming to tackle these ginormous problems, and it seems frankly unfair to put the burden of doing so on the poor folks trying to set up some tools their organizations told them to; it’s a mismatch of scale, of timescale, of mandates vs problems. But I do think that treating AI systems as a forcing function for necessary societal debate can be a helpful lens for analysis. Some thoughts here.

Enforcement of digital regulation

With the EU’s recent regulatory acts DSA/DMA/AIA (Digital Services Act, Digital Markets Act, Artificial Intelligence Act) in various stages of completion or negotiation, the elephant in the room is enforcement: Are the enforcement mechanisms going to be clear and strong enough to have teeth, and hence have a chance to do what they’re supposed to? Or will it be like the EU cookie law (ePrivacy Directive), a well-intentioned law that turned out so weak that most consumer sites made a mockery of it by manipulating their users overwhelmingly into just “consenting” to the exact things this law was meant to prevent? A while ago I wrote up some very early thoughts on this, but this is a debate that is going strong, and I can only say this with confidence: I’m very, very interested in seeing how this will play out.

×

If you’d like to work with me or explore collaborations, let’s chat!

×

Who writes here? Peter Bihr investigates how emerging technologies can benefit society. This mission is at the heart of his work as an independent advisor primarily with foundations, non-profits & the public sector at the intersection of technology, governance, policy, and social impact. He is a special advisor to Stiftung Mercator’s Center for Digital Society. Peter co-hosts the Getting Tech Right podcast. Peter served as Interim Director for the European AI Fund. He co-founded ThingsCon e.V., a non-profit that advocates for responsible Internet of Things (IoT) practices. Peter was a Mozilla Fellow (2018-19) investigating trustable technology (IoT), and an Edgeryders Fellow (2019) studying smart cities from a civil rights standpoint. He was Managing Director of the independent research firm The Waving Cat GmbH from 2015 to 2021. He wrote View Source: Shenzhen (2017) and Understanding the Connected Home (with Michelle Thorne, 2015). Postscapes named him a Top 20 IoT Influencer (2019). He blogs at thewavingcat.com and tweets at @peterbihr.

If you’d like to discuss ideas, let’s have a chat.

×

Know someone who might enjoy this newsletter? Please feel free to forward your copy or send folks to tinyletter.com/pbihr.

×

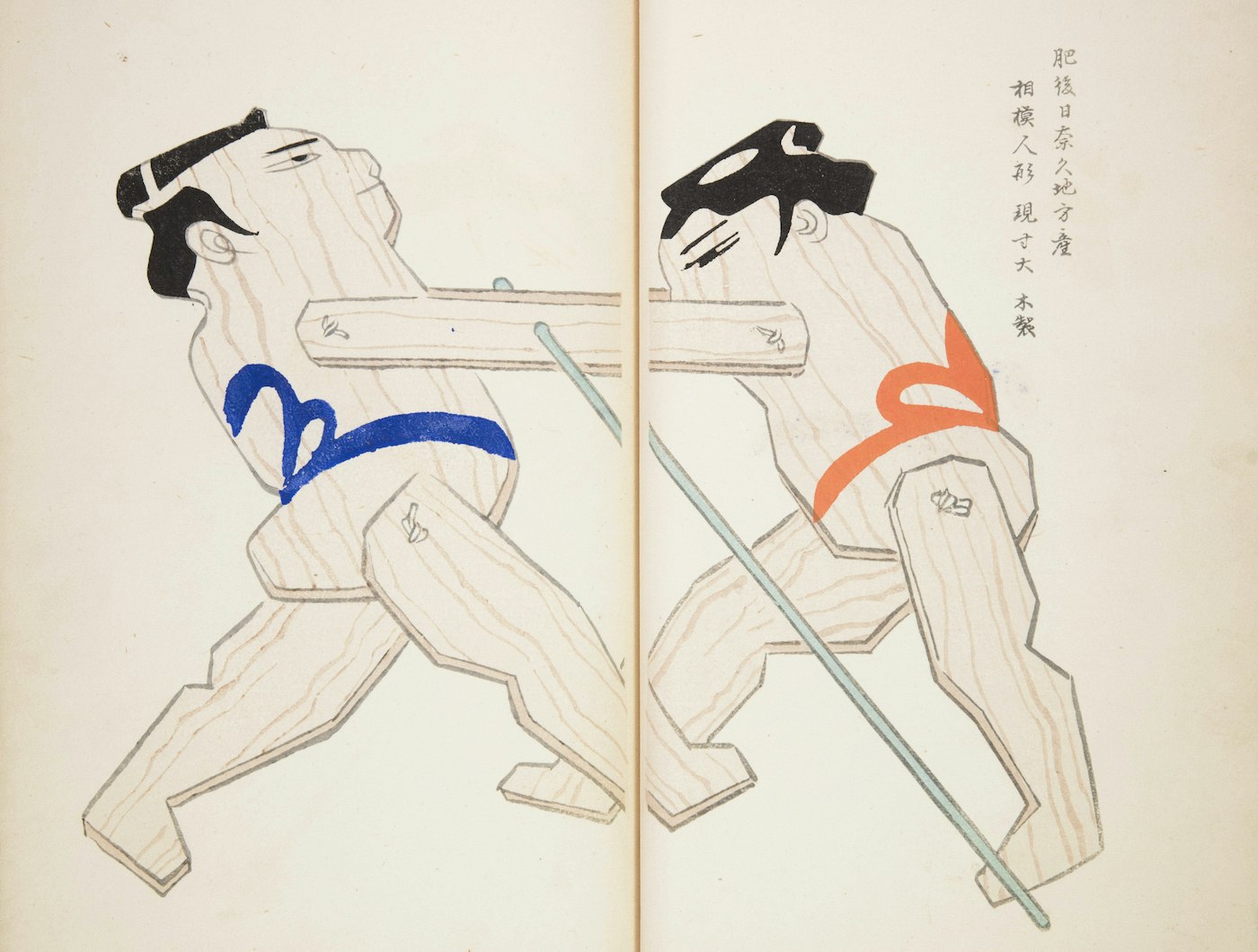

Image from Unai no tomo: Catalogues of Japanese Toys (1891–1923), Public Domain Review